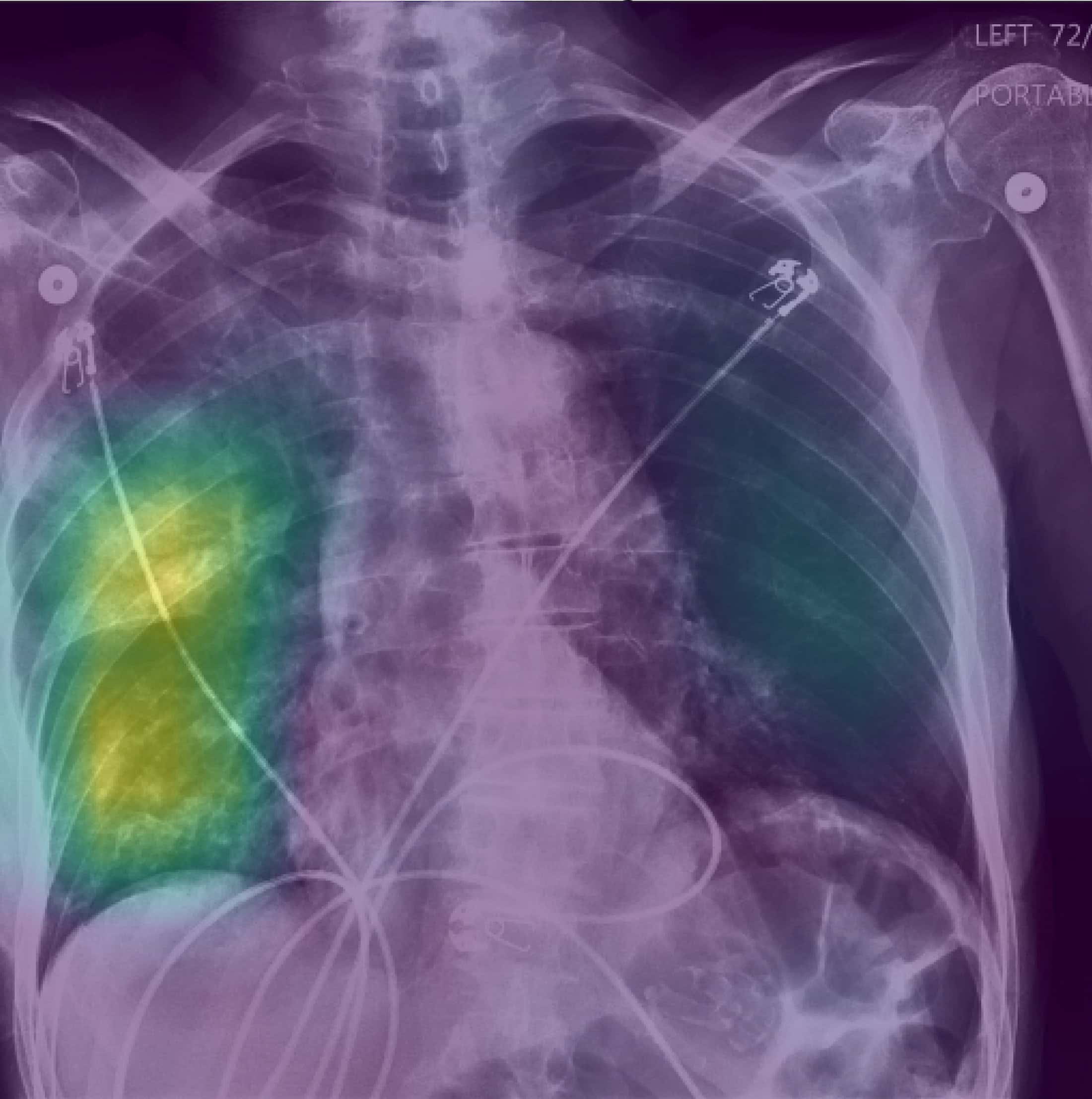

Clinicians could be fooled by biased AI, despite explanations::Regulators pinned their hopes on clinicians being able to spot flaws in explanations of an AI model’s logic, but a study suggests this isn’t a safe approach.

Just wait until one of the clinicians tries to disagree with the AI and they get fired by a hospital administrator who trusts the technology more than the people.

Don’t worry, it won’t ever get that far… Insurance AI would have denied the imaging request.

I would laugh if only this wasn’t a terrifying plausibility. Imagine you have pneumonia and need a chest x-ray to confirm it, but the request was denied because you were out of network. Imagine there is no recourse because all customer service has been outsourced to a very friendly chat bot that will kindly tell you ‘DENIED’ after you have argued with it for 30 minutes.

You don’t need to imagine: https://www.cbsnews.com/amp/news/health-insurance-humana-united-health-ai-algorithm/

I just don’t think that’s how this will work in practice.

What I expect is the AI will provide several possible explanations for the test results. Most of them will be wrong, one might be correct. If the clinician can think of more that the AI missed, those can simply also be added to the list of things to consider.

Human clinicians are surprisingly bad at diagnosing problems - as soon as we think of something that fits the symptoms, we struggle to think of other problems that would also fit. A lot of time (sometimes years) is often wasted proving the first diagnosis was wrong before anyone comes up with an alternative hypothesis. AI can do a much better job in that specific scenario… but it doesn’t mean it can replace humans entirely.