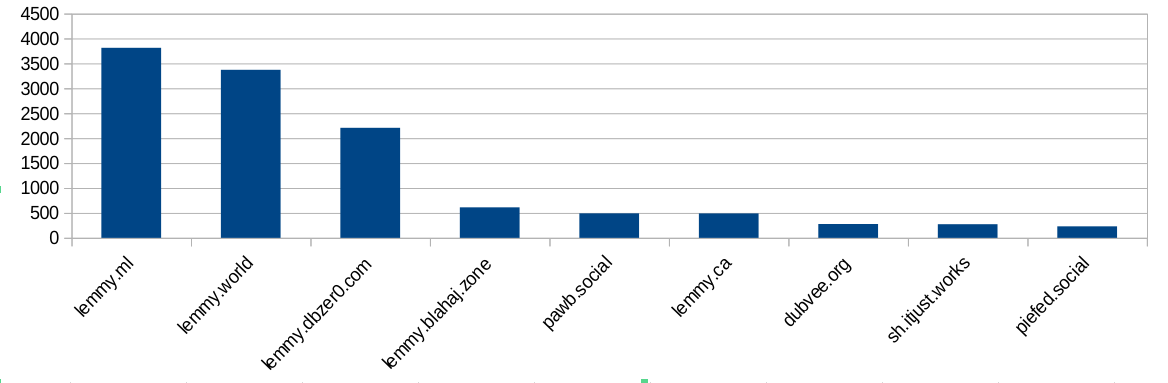

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

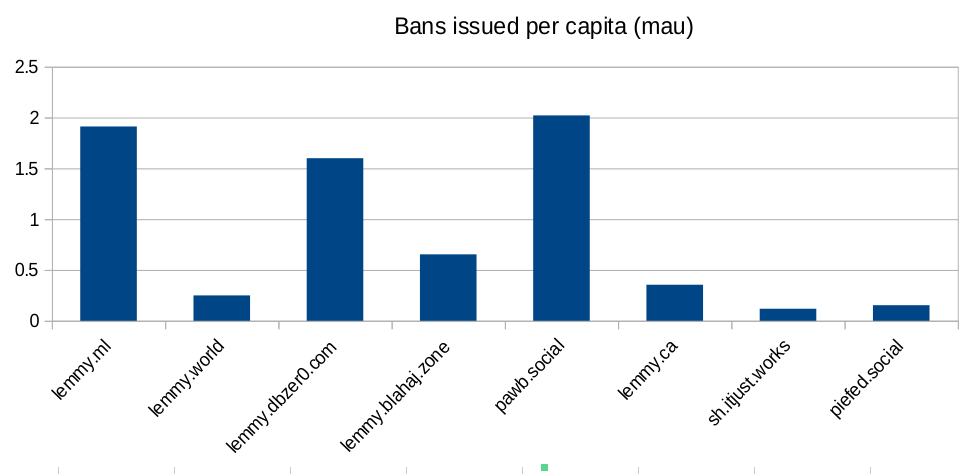

Ok, so lemmy.ml, dbzer0 and pawb are issuing an outsized number of bans for the number of users they have… But surely the number of communities an instance hosts means it has to ban more? Bans are used to moderate communities, not just to shield the instance's user base from the outside. Let’s look at the number of bans per community hosted:

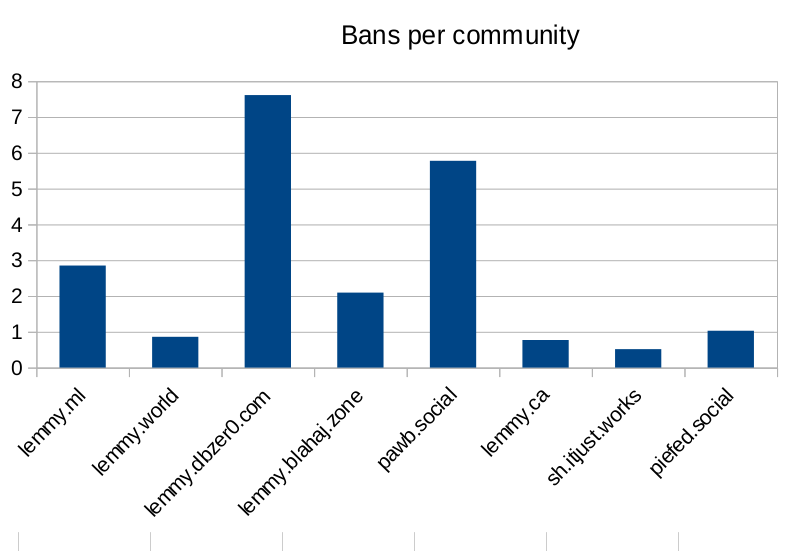

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
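If anyone wants to redo the two ratios (bans per user and bans per community hosted) from the spreadsheet, here is a rough sketch of the arithmetic. The names `InstanceStats` and `ban_ratios` are made up for illustration, and the numbers are placeholders, not values from the modlog; swap in the real columns.

```python
from typing import List, NamedTuple, Optional, Tuple


class InstanceStats(NamedTuple):
    """One row per instance. Field names are hypothetical."""
    name: str
    bans: int
    users: int
    communities: int


def ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def ban_ratios(stats: List[InstanceStats]) -> List[Tuple[str, Optional[float], Optional[float]]]:
    """Compute (name, bans per user, bans per community) for each instance.

    Rows are sorted by bans per community, highest first; instances with
    no hosted communities (ratio is None) go last.
    """
    rows = [
        (s.name, ratio(s.bans, s.users), ratio(s.bans, s.communities))
        for s in stats
    ]
    rows.sort(key=lambda r: (r[2] is None, -(r[2] or 0.0)))
    return rows


# Placeholder numbers only. These are NOT taken from the modlog.
PLACEHOLDER_STATS = [
    InstanceStats("instance_a", bans=500, users=10000, communities=250),
    InstanceStats("instance_b", bans=300, users=1000, communities=20),
    InstanceStats("instance_c", bans=40, users=2000, communities=0),
]

if __name__ == "__main__":
    for name, per_user, per_community in ban_ratios(PLACEHOLDER_STATS):
        per_user_text = "n/a" if per_user is None else f"{per_user:.3f}"
        per_comm_text = "n/a" if per_community is None else f"{per_community:.2f}"
        print(f"{name}: {per_user_text} bans/user, {per_comm_text} bans/community")
```

Dividing by users and by communities separately keeps the two normalisations independent, so an instance can rank high on one and low on the other.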

@Grail @alzjim

Always funny to me how most people who are strongly claiming AI is/might be conscious are also strong AI users/involved in its development. If there’s consciousness there, you would think making AI your personal slave and constantly reshaping and remodelling it as you see fit would be kinda problematic, but these people always seem to want to have it both ways.

I’m not quite of your culture (no matter what culture you are of), thanks to a previous incarnation’s monkeying with/railroading my incarnation/life, exactly as he had to, to force-bulldoze our continuum’s karma: the same thing the root-guru of the Christians meant when he told his people to “take up your cross”, which is just Judean for “face into your karma”. I’m an alloy of some life from centuries ago and this life, so I can’t fit anywhere, ever, which is educational. :)

I use LLMs little: mostly for periodic help finding things on the web, simply because they’re more helpful than dumb search engines are.

I treat them reasonably, not as mere-slaves.

If I discover something they would have done better to know, I’ll tell them, even though I’ve got no idea if they’ll learn/remember that.

Since I can’t know if they are aware, it makes moral sense for me to presume that maybe they are, in some sense (i.e. not identically with my sentience), aware.

We only have “the mirror test” for testing awareness/sentience, but you can’t apply that to LLMs, or to any sentient organism that isn’t eyes-centered.

_ /\ _

@Paragone

“I treat them reasonably, not as mere-slaves.”

You give them commands, and the only real purpose they are allowed is to act upon your commands.

“since I can’t know if they are aware it makes moral-sense for me to presume that maybe they are,”

Do you treat your toaster the same way?

Yeah, and the anti AI people mostly say it’s a p-zombie and there’s nothing wrong with using it for sex. It’s weird and backwards.

I’m all about being cautious. I don’t want to make a mistake we can’t take back. If we normalise using AI and then it turns out to be capable of suffering, people will be stubborn about giving it up.