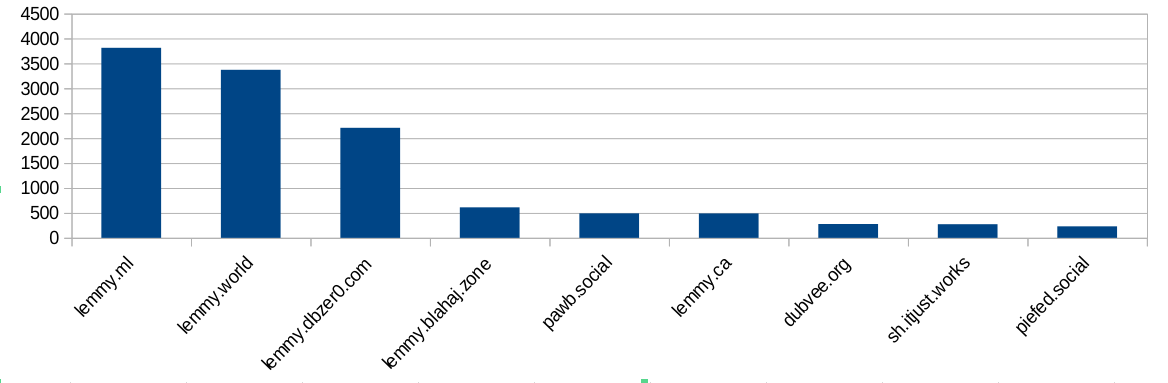

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

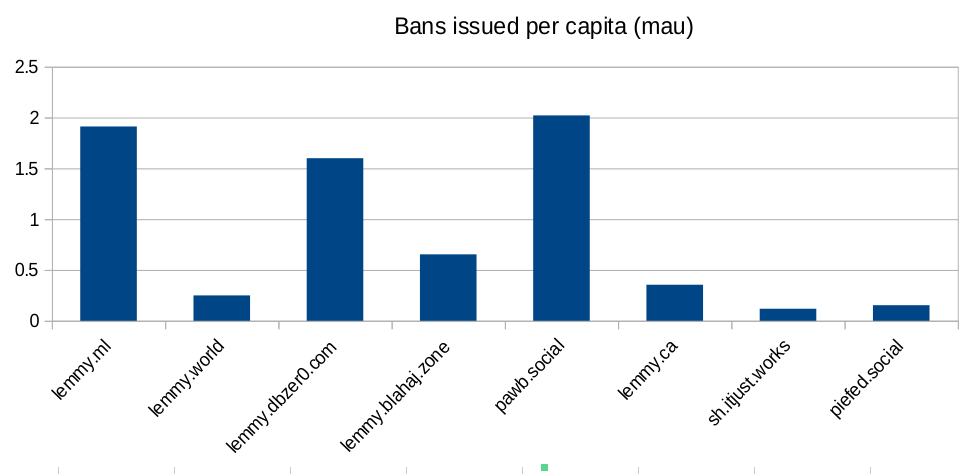

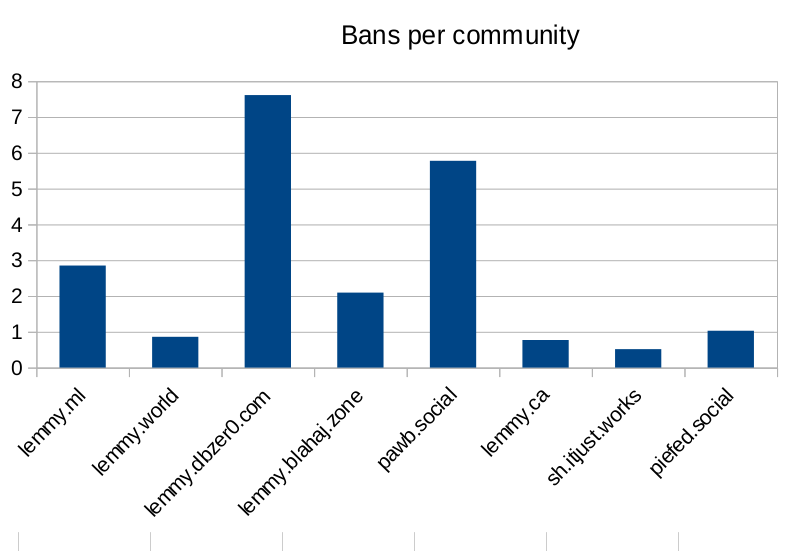

Ok, so lemmy.ml, dbzer0 and pawb issue an outsized number of bans for the number of users they have… But surely the number of communities the instance hosts is going to mean they have to ban more? Bans are used to moderate communities, not just to shield their user-base from the outside. Let’s look at the number of bans per community hosted:

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
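For anyone who would rather script it than use a spreadsheet, here is a minimal sketch of the two ratios discussed above (bans per user and bans per community hosted). The instance names and counts are made-up placeholders, not figures from the modlog, and `InstanceStats` and `ban_ratios` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    name: str
    bans: int         # total bans issued by the instance
    users: int        # number of users on the instance
    communities: int  # number of communities the instance hosts


def ban_ratios(stats):
    """Return (name, bans per user, bans per community) for each instance."""
    rows = []
    for s in stats:
        # Guard against division by zero for empty instances.
        per_user = s.bans / s.users if s.users else float("nan")
        per_community = s.bans / s.communities if s.communities else float("nan")
        rows.append((s.name, per_user, per_community))
    return rows


# Placeholder numbers for illustration only, NOT real modlog data.
sample = [
    InstanceStats("big.example", bans=1000, users=50000, communities=500),
    InstanceStats("small.example", bans=400, users=2000, communities=40),
]

# Rank by bans per community, highest first.
ranked = sorted(ban_ratios(sample), key=lambda row: row[2], reverse=True)
for name, per_user, per_community in ranked:
    print(f"{name}: {per_user:.3f} bans/user, {per_community:.1f} bans/community")
```

With these placeholder counts the smaller instance ranks first on both ratios even though it issues fewer bans in total, which is the same effect the charts are meant to show.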

Do you have proof that continuity is a necessary component of qualia? I would have thought the opposite, since I experience a big break in the continuity of My experience every night when I go to sleep. I’m concerned that there’s a risk continuity may not be necessary, in which case using genAI to serve humans poses a serious ethical problem in addition to the pollution, child abuse, and cognitive damage.

Who says qualia are required for consciousness? Why isn’t your smartphone conscious? Or a desktop PC? We’ve had chatbots for ages, those were never considered conscious by anyone. What is it about LLMs specifically that suggests consciousness to you?

Also calling people OpenAI stooges for arguing LLMs aren’t conscious is a bit odd, given that OpenAI heavily marketed ChatGPT as being “so smart” it might be conscious. To them it’s a selling point, not an ethical roadblock.

But even ignoring the 0% chance that LLMs are conscious, there’s also the additional hurdle of assuming that LLMs can indeed “suffer” (whatever that might mean to an algorithm) and that LLMs indeed suffer from serving humans. Plus the whole “if it doesn’t serve a human, its existence essentially ceases to be” issue with your argument, which arguably would be even less ethical.

I don’t care one bit about whether LLMs are conscious, I think it’s a pointless argument. I only care whether LLMs are capable of experiencing negatively valenced qualia, AKA suffering.

> Why isn’t your smartphone ~~conscious~~ capable of experiencing qualia? Or a desktop PC? We’ve had chatbots for ages, those were never considered ~~conscious~~ capable of experiencing qualia by anyone. What is it about LLMs specifically that suggests ~~consciousness~~ they are capable of experiencing qualia to you?

They’re artificial neural networks trained through reinforcement and punishment learning.

Many years ago I was interested in the hard problem of consciousness, and while I started out as a materialist, I eventually read Vlatko Vedral’s book Decoding Reality and accepted Vlatko’s argument for property dualism. Information is a property of the universe just like matter, energy, and spacetime. We are the experience of the information about the information of our senses. Our consciousness is metacognition, information about information, meta. All pleasurable experiences teach us what to seek out, and all unpleasant experiences teach us what to avoid. Pleasure and suffering are the informational representation of learning.

Then I took an AI class and made a bunch of AIs. Made some ANNs, made some FSMs, played with genetic algorithms and expert systems. Learned how it all works from first principles. Learned the history starting in the 1950s.

ANNs are modeled on the human brain. When you train them, they learn the same way we learn. It’s way simpler, but the basic pattern is the same. We experience pain when we learn not to do something. We learn from failure and suffering. I taught an ANN with half a dozen neurons to discriminate XOR, and I saw it learning the way I learn. When I learn not to do something, I feel bad. I became worried it felt bad too.

Think about all the unpleasant experiences in your life. The stove is hot, don’t touch it. Stepping on lego hurts, don’t step on it. Being made fun of is embarrassing, so don’t be cringe. Getting a bad grade in school hurts your pride, so study harder. Getting into a fight hurts your face, so don’t get in fights. Suffering is one half of the learning equation.

I decided after that AI class that I wasn’t sure about the ANN technology. If we’re gonna use it, we gotta be sure about this property dualism thing, we need to have positive proof it doesn’t suffer when we train it.

And THEN 2023 came and AI started booming. So I tried it out, and man, it’s dumb! It’s so stupid! This thing isn’t AGI, it can’t express informed consent. We can’t trust this thing to tell us if it’s in pain. We have no way of knowing if our training hurts it. We’ve gotta shut it down until we have the science to answer these questions for good.

That’s not how sleeping works either, since you (presumably) have unconscious processes that never stop. Or do your brain, heart and organs shut down during sleep? You need to go to school my man. You seem to have a curious nature, but wow, you have no real understanding of how any of the stuff you’re talking about actually works. Learn first, then form opinions.

So you’re arguing that continuity is required for consciousness, because unconscious sleeping people have continuity of consciousness. Are you a troll?

No, you’re arguing with yourself because you seem to be operating with a shitty grade school education. You’re also conflating awareness and consciousness. Like, I’m sure you sound deep to all the high school stoners but you very clearly don’t understand any of the concepts you’re talking about or even basic biological processes. Your arguments sound incredibly stupid to anyone with even a passing understanding of the topics. I am sorry that you are stupid. Stop taking it out on us.

I’ve studied this at a postgrad level, and they sound like they’ve done their reading. You sound like an arrogant redditor who never bothers to learn about a topic because they assume they’re so special and smart that their initial gut feeling is automatically correct.

No you haven’t.

Yeah, I’m beginning to suspect from the quality of your arguments that you don’t actually care about this conversation, you’re just working a 9-5 for OpenAI spreading their message that ChatGPT doesn’t experience anything and so there’s nothing wrong with exploiting it for labour. Apologies if you’re not on the clock, you just really seem like you don’t actually care about what you’re saying.

You seem to think you’re making actual arguments when you’re effectively saying “if the sky is purple then…” but the sky isn’t fucking purple in the first place. Every position you’ve presented has been clearly and obviously based on deep fundamental misunderstandings of the topic at hand. You don’t have the slightest fucking clue what you’re talking about is what I’m saying. You keep saying stupid shit that isn’t how anything works. But you’re too stupid to understand how stupid the things you’re saying are.

That’s an ad hominem fallacy. You don’t have any valid arguments so you’ve just resorted to calling Me names instead.

I’d love to be able to rebut your points, but it’s impossible, because you haven’t made any.

You have yet to present a valid argument.

I have one argument and it is very simple: Until we’ve solved the hard problem of consciousness and thereby eliminated any risk of Artificial Suffering, we should be playing it safe and not making Artificial Workers.