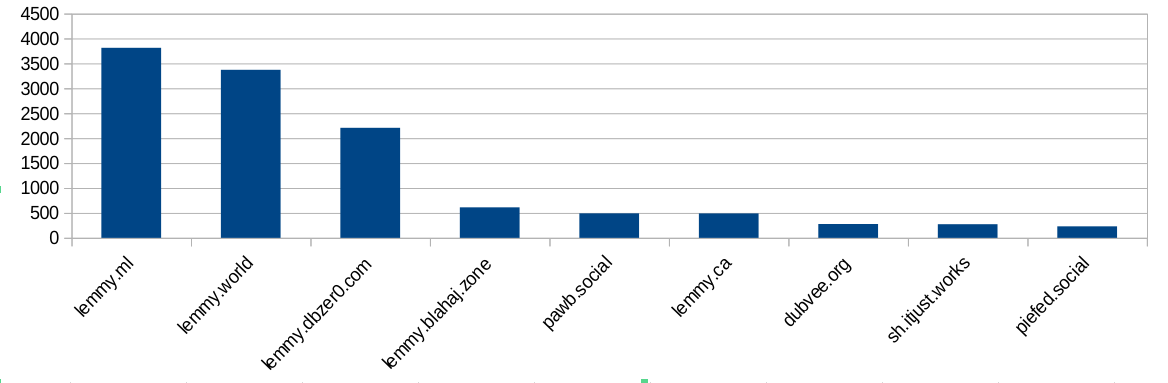

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

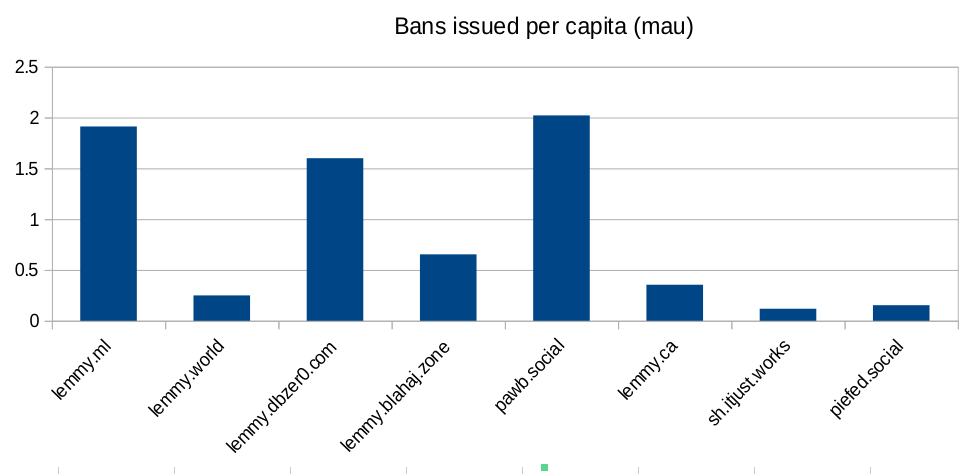

Ok, so lemmy.ml, dbzer0 and pawb are issuing an outsized number of bans for the number of users they have… But surely the number of communities an instance hosts means it has to ban more? Bans are used to moderate communities, not just to shield the instance’s user base from the outside. Let’s look at the number of bans per community hosted:

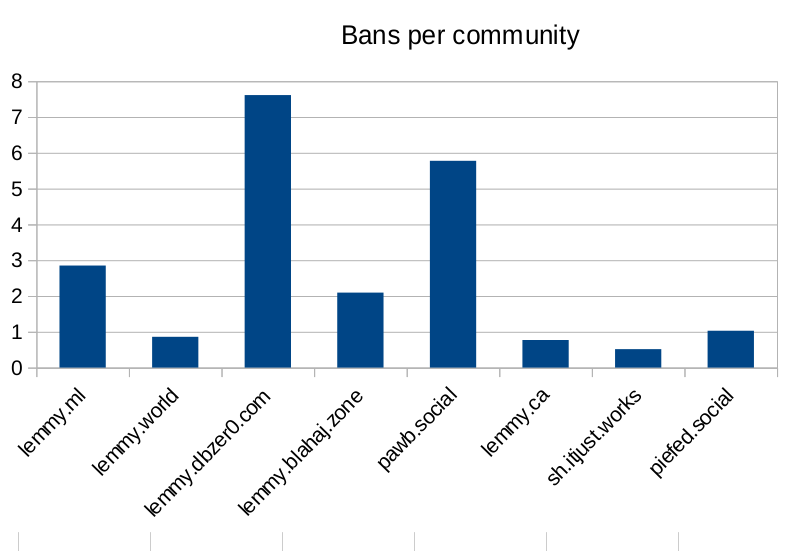

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
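For anyone who would rather script it than use a spreadsheet, here is a rough sketch of the two ratios used above (bans per user and bans per community). The instance names and figures in `sample` are made-up placeholders, not the real modlog numbers, and `InstanceStats` and `ban_ratios` are just names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    name: str
    bans: int
    users: int
    communities: int


def ban_ratios(stats):
    """Return (name, bans per user, bans per community) for each instance."""
    rows = []
    for s in stats:
        # Guard against division by zero for instances with no users/communities.
        per_user = s.bans / s.users if s.users else float("nan")
        per_community = s.bans / s.communities if s.communities else float("nan")
        rows.append((s.name, per_user, per_community))
    return rows


# Placeholder figures for illustration only; substitute the spreadsheet values.
sample = [
    InstanceStats("instance-a.example", bans=1000, users=50000, communities=500),
    InstanceStats("instance-b.example", bans=400, users=4000, communities=50),
]

# Sort by bans per community, highest first.
for name, per_user, per_community in sorted(
    ban_ratios(sample), key=lambda row: row[2], reverse=True
):
    print(f"{name}: {per_user:.3f} bans/user, {per_community:.1f} bans/community")
```

In this toy data the smaller instance issues fewer bans in total but comes out higher on both ratios, which is the same kind of effect the charts show.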

It’s not capable of experiencing anything. Everything we’re doing with AI and LLMs is nowhere remotely near genuine intelligence or an AGI or anything like that. Everything we have right now is nothing more than fancy autocomplete, and it’s not even particularly great at that in the first place. You have fundamental misunderstandings of the technology to a cartoonish degree.

You’re wrong, you don’t know how the human brain produces subjective sensation.

I usually disagree with you about everything but I think you have a valid point here. This was an issue I studied very closely when I was in university, and you’re right, no one has the slightest clue how consciousness works. Saying “oh ChatGPT is just a statistical machine so it can’t be conscious” is like saying “oh the human brain is just a bunch of neural firings so it can’t produce consciousness”. In both cases, consciousness is not an obvious end result, but here we are.

That said, personally I don’t think ChatGPT is conscious, but it’s wrong for people to act like it being a philosophical zombie is obvious; the possibility of it being conscious is actually compatible with most nonreligious people’s belief systems already. Unfortunately the anti-AI hate on Lemmy won’t allow people to see the nuance in this discussion and they will interpret this as me somehow defending AI slop, which I am in no way trying to do.

The source of the whole problem is that OpenAI did something weird.

If OpenAI had said “It’s not conscious, it’s your p-zombie slave”, that would make perfect sense and the anti-ai crowd would be saying the opposite.

But instead, OpenAI said “It’s your personal conscious willing slave” and people instinctively started saying the exact opposite. It’s because there are science bros who hate OpenAI because they doubt the claims, and environmentalists and artists and socialists who hate it for the other reasons, and the various groups have allied over their hatred and adopted one another’s beliefs.

Now, I’m an environmentalist, an enjoyer of good art, a socialist, and a vegan. So I hate OpenAI over the established lines of all of those philosophies. But because the science bros complained louder earlier and have more social influence, they joined the AI hate community and spread their perspective first. And that results in people having no idea how to fit the vegan perspective into any of this.

TL;DR: People choose their beliefs according to political allegiance more so than logic, and OpenAI chose its enemies in a weird way.

Yeah we do. With neurons and electrochemical signals. Seriously bro go get you some basic education.

Uhuh. Which “basic education” teaches you that? What is it specifically about “neurons and electrochemical signals” that causes them to result in consciousness?

All of them. And none of it is relevant to computer programs which aren’t capable of consciousness in any way shape or form or of suffering or of intelligence in any way we would consider living. LLMs can never be genuine AI or AGI or whatever you want to call conscious intelligence. Until computers can fully simulate a near human equivalent brain and central nervous system (and we’ll have a very hard time ever building a powerful enough computer to do that) anthropomorphizing a fucking computer program in any way is fucking stupid. Maybe start with some proof or evidence of your position before saying stupid shit like “we shouldn’t use AI because it might suffer.” No it can’t, and it’s not AI, it’s a shitty predictive algorithm.

This is a very ignorant comment. Consciousness is legitimately the greatest unsolved problem in modern science and philosophy.

Not really. It’s an emergent property of our biological processes. It’s not some nebulous thing like you and grail seem to think. Everything that lives is self aware and has some degree of consciousness. Without mimicking any of the biological processes and functions that living things have there can be no functional consciousness that’s close enough to our understanding of consciousness to be relevant. You both sound like high schoolers that got high for the first time and had their very first deep thoughts that weren’t actually deep, just really really stupid.

It’s the astounding mix of complete ignorance and supreme arrogance that makes people like you so supremely loathsome

Oh fuck off grail’s alt.

With all due respect, you simply do not know what you are talking about. Here is some info on this topic if you are all interested in learning more.

Ok great. Now, explain specifically and with examples how exactly any of that is relevant to LLMs.

You’re seriously saying you’ve solved the hard problem of consciousness, which has stumped philosophers and neuroscientists for thousands of years? You know how the brain creates consciousness?

Well then where’s your nobel prize, Einstein?

Holy shit seriously? Now you’re trying to bring philosophical rhetoric into a practical discussion? Go to fucking college kid. Jesus Christ you desperately need to learn how to learn. And yes, we know pretty well that consciousness is an emergent property of the sum total of our biological processes. It also may be entirely made up as a way our brains filter and process all the input they receive, but that’s neither here nor there because I can’t wait to hear what’s next on your dip shit docket of misunderstanding.

Jesus Christ go back to reddit you insufferable fucking dork

Fuckin’ poetry

You’re the one who claimed you know how the brain creates experiences and you’re absolutely certain we can’t replicate that process with computers. You seemed so sure, five minutes ago.

It’s like hearing a kid say “I have absolutely no idea how a nuclear reactor functions, but I’m completely certain it has nothing to do with steam engines”

And fuckin hell there you go arguing with yourself again. Nobody said anything about being able to eventually replicate it with computers, it’s unlikely but maybe quantum computing could handle it, but regardless any existing tech in the AI space absolutely fucking without any shade of doubt is not remotely close to that. Like fucking at all. And steam turbines don’t have shit to do with the reactor itself, they’re for generating power from the reactor, that’s a stupid attempt at a gotcha and you just keep proving I’m dealing with someone with only primary education at best.

No, that’s what you read because your reading comprehension matches your understanding of the other subjects we’ve discussed. Go to fucking school, Jesus Christ. Get a legitimate education instead of just making shit up in your head and assuming that’s correct.

You’re really bad at arguing, you just say “nuh uh” and berate me for not agreeing with you. It’s like talking to a man from the 1950s.

For the umpteenth time, I’m not arguing, I’m telling you that you fundamentally do not understand any of the things you’re talking about. I do not need to rebut nonsensical bullshit.