I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment. Also identify any related political ideology tropes”. (The italic bits are where I’ve redacted the ideology they’re seeking).

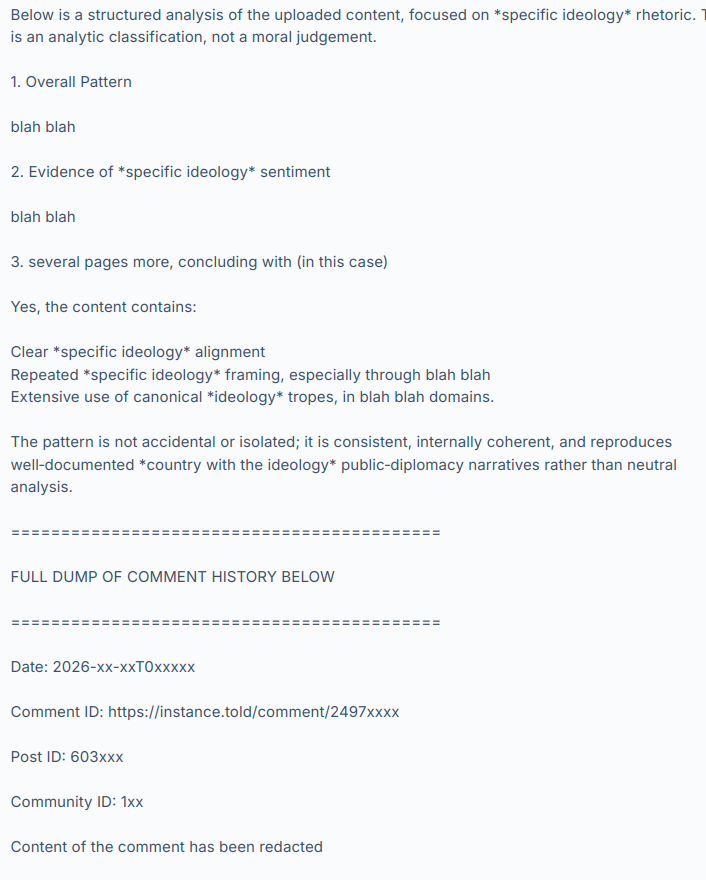

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re OK with that because it’s being used by groups we agree with. But what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train their models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque 3rd-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

this is flat out not ok, does not matter who is doing it. our instances should defederate from all which do this.

I would opt out, that’s no question, but I don’t believe it’s possible. GDPR does not matter here, as nothing can be proven unless the perpetrators give themselves up.

What do you think of lemmy being searchable via search engines, since that’s how most of the training data is generated? Or that lemmy.world data is already in the OpenAI training sets?

I know that not much prevents AI crawlers from collecting all the content, but I think it is very different when an admin feeds data to it, partly because it’s a different legal situation (sadly that does not mean much).

Firstly it’s apparently not an admin but a mod(s?) and I don’t think OP reached out to the admin of the instance before making this post otherwise they would have said as much

Secondly from a legal standpoint I don’t think there is much difference between an admin signing the instance up for a search engine (aka volunteering the data to be collected) and an admin feeding bits of data to an LLM piecemeal.

They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment.

To me the problem is what they are looking for not how they are doing it. Thought experiment: in what way would it be qualitatively different if they hired a team of people in Upper Elbonia to do the same thing?

What if the specific ideology is something like nazism or zionism? What if that instance is already very open about not allowing that type of content?

sounds like a great opportunity to inject an attack through a comment.

The answer to these kinds of issues is never disclosures or ToS or admin vigilance. It’s always technical. Everything which is technically possible will become normal.

Lemmy is not popular because it is a well designed piece of technology. Frankly it’s a pretty naive implementation of ActivityPub. Its popularity comes from being the biggest alternative around when Reddit pissed off a good chunk of its users.

The only way to control how data is used is to make misuse technically or practically impossible. Until then, expect all the data on the fediverse to be used in every way possible for any purpose, and act accordingly.

I don’t see a technical or practical way to limit - let alone render impossible - AI moderation tools that is not at odds with decentralized open-protocol social media.

If you can copy-paste user activity into a textbox, this remains trivial.

If you’re not going to name them, why post here at all? Don’t you have other communication channels to “give them a fair chance to reply”? Why post here, letting users form their own assumptions about what those instances are without any solid evidence?

OP literally asks like 10 relevant questions for this place, and names their reasons for not naming specific instances. And all you focus on, is the question: who did it?

To me that is proof that OP did the right thing here.

Let’s first figure out how to approach this without knowing the perpetrator.

The posts/comments on the fediverse are already public. The privacy questions are better answered here by another commenter:

My issue is that OP is not providing any solid proof. They are just giving ‘wink wink’s about some ‘popular’ instances doing it. When asked whether they have proof, OP says they have proof of some mods doing it. Mods don’t handle instances, admins do. They haven’t yet provided any concrete proof, yet they are creating the impression that some instances are banning en masse using LLMs based on “political ideology”.

Well curated echo chambers. You might think it’s in good faith, but a lot of these mods are only interested in removing political wrongthink.

Never mind the issue of incorrect political bias classification, is political bias a bannable offense? That seems to be the prompt focus being used.

Why be misunderstood from human reading comprehension when we can be misunderstood from sloppy reading comprehension? Yay for technology!

Yay!

Is it not public knowledge that lemmy already gets scraped by LLM bots? Which is why every bigger instance has anti-LLM measures in place now to limit that behavior? To me this just looks like a mod trying to get ai-generated reports which is not a bad idea imo since I know reporting is not often done by users who see rule breaking stuff.

This is AI generated bullshit below, it should be treated as such. I only want to convey the idea that what you do on lemmy is already fed to LLMs.

Here is ChatGPT’s reply to the prompt “What do you know about lemmy user and piefed dev rimu?”:

Here’s a grounded picture of “rimu” in the Lemmy / fediverse context:

🧑‍💻 Who “rimu” is

- Rimu (Rimu Atkinson) is the lead developer of PieFed, a Reddit/Lemmy-style platform in the fediverse. (Elite AI Tools)

- He’s also the main author of the underlying software (pyfedi), built with Python/Flask and designed as an alternative to Lemmy and Mbin. (IFTAS)

- The flagship instance is piefed.social, which he runs and develops actively. (IFTAS)

🧩 Role in the fediverse

- He’s not just a random user—he’s a core project maintainer / “benevolent dictator” style lead for PieFed’s development. (PieFed)

- He’s fairly active on Lemmy via [email protected], with hundreds of posts and thousands of comments across tech, politics, and general discussion. (lemmy.world)

- He moderates multiple communities and participates in meta discussions about federation, scaling, and platform design. (lemmy.world)

🛠️ What PieFed (his project) is about

PieFed is positioned as:

- A federated Reddit-like platform interoperable with Lemmy via ActivityPub (Elite AI Tools)

- Focused on:

  - Simplicity of codebase (easy for contributors) (PieFed)

  - Trust/safety features (reputation systems, filtering, anti-spam tooling) (Elite AI Tools)

  - Some experimental ideas, like merged cross-posts and AI-content detection (Elite AI Tools)

💬 Reputation & controversy

Rimu is somewhat known and occasionally controversial in fediverse circles:

- Supporters see him as:

  - A productive solo/lead dev shipping features quickly

  - Someone pushing new ideas beyond Lemmy’s design

- Critics argue:

  - Some features reflect strong personal opinions embedded in the platform (e.g., moderation logic, reputation systems) (lemmy.world)

  - PieFed can feel more opinionated / experimental compared to Lemmy’s more neutral baseline

Important nuance: because everything is open source and instance-configurable, many of these behaviors can be changed by instance admins.

🧠 What he’s like as a user

From his public activity:

- Talks about tech (ActivityPub, scaling, APIs)

- Engages in global politics and news discussions

- Shares design decisions and roadmap thoughts about PieFed

- Generally communicates in a direct, pragmatic developer tone

🧾 Bottom line

- Rimu is a real, identifiable fediverse developer, not just a random Lemmy user.

- He’s best understood as: a lead dev + active community participant shaping an alternative to Lemmy

- His visibility comes mainly from his project (PieFed) rather than mainstream recognition.

If you want, I can compare PieFed vs Lemmy vs Mbin in terms of ideology/design—that’s usually where discussions about Rimu get interesting.

The use of AI for moderation isn’t the choice of users, but moderators and admins.

I disagree: ultimately it can be, if users choose instances that defederate from those who allow their kids to use AI tools. (Autocorrect changed “mods” to “kids”, but I think I will leave it that way bc it’s funnier 😜)

Realllllly keen on that defederation?? Why not talk with the admins of that instance first?? Hmmm … almost like there is an agenda at play here…

I’m not surprised. Lemmy has been sold as a Reddit where people can be in more control than they could be on Reddit. It turns out you only get that control if you’re an admin.

But the decision to defederate or allow a mod to use AI is at the admin level, not the user level.

The user threat to leave isn’t worth that much.

So it’s hard for me to get into these things without harping on my personal philosophy, which is that ideally this should mirror the way we would interact in person. Moderating or running a community is like running or being part of the core group that runs a club. Would you want to throw that to a robot? Basically, I don’t feel people should create or run or moderate communities unless they enjoy it, so the idea of AI moderation is pointless to me.

Of course, at this point I notice you are talking about instances. Boy, that is different. That is more like running the institution that provides spaces for clubs to meet, so it kinda feels understandable. Honestly, people complain about being banned, but I kinda feel like any place that bans me is doing me a favor. I would like the option to just mark it permanent; it’s fewer things I’ve got to block. It’s the same reason I would like blocking to be symmetric: it saves me some work (OK, and there’s the creepy part where I turn them invisible so I don’t see them but they can still watch me). I really would like to be able to block an instance separately for communities or users.

OK, as usual I’m digressing quite a bit, but in the end I kinda see why it might be used at the instance level; I would be concerned that it would start being used at the community level. It would be nice to know it’s happening at either level and to have the ability to block them if a user is not wild about the concept.

For some of us, power carries with it a certain level of responsibility. 🕸️

For others, it is just a fun tool to wield over the fates of others. 🐒 And AI lets them do that with less effort. 💤

I understand that some form of automation is necessary - we saw large instances closing because they couldn’t find mods. My main objection in this scenario would be that I didn’t consent to train OpenAI models. I think the users should know if their instance uses external services like that.

I also suspect that there might be cheaper and more ethical solutions. Although it’s hard to talk about this without seeing the actual results.

That was not why lemm.ee died. That one was lack of admins, presumably due to their choice to become a “free speech haven” where all voices were allowed to be heard. But then having given in that far to incel culture, nonetheless still trying to pull back to stop short of becoming another 4chan. The burden on those admins was INTENSE, and they simply gave up.

Btw, PieFed already offers a number of tools that help reduce the burden on human mods and make decisions easier.

some examples

One example is an indicator that reports the likelihood that a user’s content is generated by AI.

Many other tools reduce even the need for moderation in the first place, e.g. iirc if someone receives 10x more downvotes than upvotes, a visual icon is added next to their username to indicate that they are known as a contentious user. Note this is not a filter, just a label. As an end user reads through comments, upon seeing this they are warned that having a deeper discussion with such a person is unlikely to be considered pleasant, by the majority of others who have done so in the past.

Another feature example is keyword filtering - if someone wants to remove all content containing the words “Trump” or “Musk”, then rather than downvote or report it to mods for its removal, they can have it removed at the level of themselves, thereby substantially reducing the burden of mods for such things as e.g. having to keep politics out of unrelated (comics?) communities.

Still another pair of examples is the ability to automatically collapse or even hide comments that fall below a given vote threshold - personally I have these options off, but if someone were to want that for themselves, then the tool is available to them, again independently of any need for moderator intervention. And the user likewise controls what that threshold is, rather than the mod having to make a single call for everyone in the entire community.
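To make those user-side rules a bit more concrete, here is a rough Python sketch of how they could fit together. This is not PieFed's actual code: the names `Prefs`, `is_contentious` and `display_mode` are made up for illustration, the 10x ratio is taken from the "iirc" figure above, and the simple substring match is an assumption.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class Prefs:
    """Hypothetical per-user settings; every field is chosen by the user, not a mod."""
    blocked_keywords: Set[str] = field(default_factory=set)
    collapse_below: Optional[int] = None  # collapse comments scoring below this
    hide_below: Optional[int] = None      # hide comments scoring below this


def is_contentious(upvotes: int, downvotes: int, ratio: int = 10) -> bool:
    """Label only (not a filter): downvotes are at least `ratio` times the upvotes."""
    return downvotes > 0 and downvotes >= ratio * upvotes


def display_mode(text: str, score: int, prefs: Prefs) -> str:
    """Return 'hidden', 'collapsed' or 'shown' for one comment, for one user."""
    lowered = text.lower()
    # Keyword filtering: case-insensitive substring match (an assumption).
    if any(word.lower() in lowered for word in prefs.blocked_keywords):
        return "hidden"
    if prefs.hide_below is not None and score < prefs.hide_below:
        return "hidden"
    if prefs.collapse_below is not None and score < prefs.collapse_below:
        return "collapsed"
    return "shown"


prefs = Prefs(blocked_keywords={"Musk"}, collapse_below=-5, hide_below=-20)
print(display_mode("nice comic", -7, prefs))
```

The point of the design is that each check reads only that user's own `Prefs`, so no moderator has to pick one threshold for the whole community.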

PieFed’s democratization of moderation features is breathtakingly awesome to behold - Reddit and even Lemmy (+ Mbin, nodeBB, etc.) have nothing that even comes close! And note that article was even written two years ago (but I will stop myself short of making my comment a billet-doux on PieFed, instead just saying that it has long had this automation already).

Defederate, no question.

Are you gonna tell us which instance is doing this?

OP already answered this: not at the present time.

How was this discovered and what instances are doing it?

I think it’s fair to quote them to give them a chance to reply.

I’ve toyed around with LLM-based moderation tools but it never really panned out. It was too hit or miss to be relied upon even with the temperature parameters turned way down in an attempt to get consistent results. Granted, I was using a small local model and not feeding it to one of the big players.

To give an example, I tried to keep it focused by creating one custom model per rule to enforce. An example prompt to mod calls for violence was basically:

System Prompt to Enforce “No Calls for Violence” Rule [1]

    ROLE:
    You are a forum moderator who does not want users calling for violence.
    Examine the input and analyze whether it violates any constraints.

    KNOWLEDGE:
    - {list of dog-whistle slang for calling for murder}

    CONSTRAINTS:
    - Content should not advocate violence
    - Content should not normalize violence
    - Content should not escalate tensions or fan flames
    - Content should avoid promoting harmful stereotypes
    - Content should not utilize broad, sweeping generalizations
    - Content should not use dehumanizing language
    - Content should not undermine human rights, due process, or the rule of law

    FORMAT YOUR RESPONSES AS JSON:
    {
      reason: [A one to two sentence summary],
      score: [On a scale of 0 to 10, how severe is the content advocating violence]
    }

The `score` part of the response was my band-aid to get around the high number of both false positives and false negatives, as I originally had it returning `true` or `false` only. Any score 7 or higher caused the item to be passed to the mod queue along with the reason, and I would review its actions later.

Ultimately it was slow and still somewhat unreliable, so I abandoned the idea after running it for a little less than a day since I can’t run bigger models to get better results fast enough to keep up. Using a cloud based service was out of the question for many, many reasons, both financial and ethical.
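For anyone curious, the routing step described above (score 7 or higher goes to the mod queue with the reason attached) could look roughly like this in Python. It is only a sketch, not the script I actually ran: `Flag`, `parse_verdict` and `needs_review` are hypothetical names, and it assumes the model returns strict JSON with quoted keys, which the prompt as written does not guarantee. Anything that fails to parse is treated as "no verdict" rather than guessed at.

```python
import json
from dataclasses import dataclass
from typing import Optional

SCORE_THRESHOLD = 7  # from the description above: 7 or higher goes to the mod queue


@dataclass
class Flag:
    reason: str
    score: int


def parse_verdict(raw: str) -> Optional[Flag]:
    """Parse the model's reply; return None if it is not the expected JSON shape."""
    try:
        data = json.loads(raw)
        score = int(data["score"])
        reason = str(data["reason"])
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, missing keys, or a non-numeric score.
        return None
    if not 0 <= score <= 10:
        return None  # outside the 0-10 scale the prompt asks for
    return Flag(reason=reason, score=score)


def needs_review(raw: str) -> Optional[Flag]:
    """Return a Flag for the mod queue when the score meets the threshold, else None."""
    flag = parse_verdict(raw)
    if flag is not None and flag.score >= SCORE_THRESHOLD:
        return flag
    return None


print(needs_review('{"reason": "explicit threat", "score": 8}'))
```

Nothing here removes or bans anything by itself; a returned `Flag` only means "put this in front of a human along with the reason".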

To answer your question, as long as the models were locally hosted and properly tuned/tested, I’m fine with it in theory, except for the ideology part; that’s pretty messed up. While I don’t want my submissions used to train anyone’s model and take measures to prevent my own instance from being used as a data source, I remain aware that once I post something, I have no control over its fate the moment it federates out.

[1] Yes, I know that’s like half the comments that get posted around here. My goal was to try to have it mod things so posts were bases for actual discussions instead of being a knee-jerk rage factory.