The research from Purdue University, first spotted by news outlet Futurism, was presented earlier this month at the Computer-Human Interaction Conference in Hawaii and looked at 517 programming questions on Stack Overflow that were then fed to ChatGPT.

“Our analysis shows that 52% of ChatGPT answers contain incorrect information and 77% are verbose,” the new study explained. “Nonetheless, our user study participants still preferred ChatGPT answers 35% of the time due to their comprehensiveness and well-articulated language style.”

Disturbingly, programmers in the study didn’t always catch the mistakes being produced by the AI chatbot.

“However, they also overlooked the misinformation in the ChatGPT answers 39% of the time,” according to the study. “This implies the need to counter misinformation in ChatGPT answers to programming questions and raise awareness of the risks associated with seemingly correct answers.”
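For a sense of scale, here is a rough back-of-the-envelope sketch that turns the quoted percentages into approximate counts. It assumes the 52% and 77% figures are taken over all 517 questions, which the excerpt does not state explicitly, and the helper name `approx_count` is made up for illustration.

```python
def approx_count(total: int, percent: float) -> int:
    """Round a percentage of a total to the nearest whole item."""
    return round(total * percent / 100)


TOTAL_QUESTIONS = 517  # number of Stack Overflow questions quoted in the article

# Assumption: both percentages apply to all 517 ChatGPT answers.
incorrect = approx_count(TOTAL_QUESTIONS, 52)
verbose = approx_count(TOTAL_QUESTIONS, 77)
correct = TOTAL_QUESTIONS - incorrect

print(f"incorrect: ~{incorrect}, verbose: ~{verbose}, correct: ~{correct}")
```

The 35% and 39% figures come from the separate user study, so they are deliberately left out of this calculation.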

Who would have thought that an artificial intelligence trained on human intelligence would be just as dumb.

Hm. This is what I got.

I think about 90% of the screenshots we see of LLMs failing hilariously are doctored. Lemmy users really want to believe it’s that bad though.

Edit:

I’ve had lots of great experiences with ChatGPT, and I’ve also had it hallucinate things.

I saw someone post an image of a simplified riddle, where ChatGPT tried to solve it as if it were the entire riddle, but it added extra restrictions and gave a confusing response. I tried it for myself and got an even better answer.

Prompt (no prior context except saying I have a riddle for it):

A man and a goat are on one side of the river. They have a boat. How can they go across?

Response:

The man takes the goat across the river first, then he returns alone and takes the boat across again. Finally, he brings the goat’s friend, Mr. Cabbage, across the river.

I wish I was witty enough to make this up.

I reproduced that one and so I believe that one is true.

I looked up the whole riddle and can see how it got confused.

It happened on 3.5 but not 4.

Interesting! What did 4 say?

Evidently I didn’t save the conversation but I went ahead and entered the exact prompt above into GPT-4. It responded with:

The man can take the goat across the river in the boat. After reaching the other side, he can leave the goat and return alone to the starting side if needed. This solution assumes the boat is capable of carrying at least the man and the goat at the same time. If there are no further constraints like a need to transport additional items or animals, this straightforward approach should work just fine!

Thanks for sharing!

Yesterday, someone posted a doctored one on here saying everyone eats it up even if you use a ridiculous font in your poorly doctored photo. People who want to believe are quite easy to fool.

Or you missed the point that this was a joke?

Holy fuck did it just pass the Turing test?

I actually had the opposite the other day where the code only broke on my machine…

In the end I just commented it out. I don’t see why everybody else should have working code and not me.

“Major new Technology still in Infancy Needs Improvements”

– headline every fucking day

“Corporation using immature technology in production because it’s cool”

More news at eleven

This is scary because up to now, all software released worked exactly as intended so we need to be extra special careful here.

Yes, and we never have and never will put lives in the hands of software developers before!

/s…for this comment and the above one, for anyone who needs it

unready technology that spews dangerous misinformation in the most convincing way possible is being massively promoted

Yeah, because no human would convincingly lie on the internet. Right, Arthur?

It’s literally built on what confidently incorrect people put on the internet. The only difference is that there are constant disclaimers on it saying it may give incorrect information.

Anyone too stupid to understand how to use it is too stupid to use the internet safely anyways. Or even books for that matter.

Holy mother of false equivalence. Google is not supposed to be a random dude on the Internet, it’s supposed to be a reference tool, and for the most part it was a good one before they started enshittifying it.

Google is a search engine. It points you to web pages that are made by people. Many times, the people who make those websites have put things on them that are knowingly or unknowingly incorrect but said in an authoritative manner. That was all I was saying, nothing controversial. That’s been a known fact for a long time. You can’t just read something on a single site and then be sure that it has to be true. I get that there are people who strangely fall in love with specific websites and think they are absolute truth, but that’s always been a foolish way to use the internet.

A great example of people believing blindly is all these horribly doctored Google AI images saying ridiculous things. There are so many idiots that think every time they see a screenshot of Google AI saying something absurd that it has to be true. People have even gone so far as to use ridiculous fonts just to point out how easy it is to get people to trust anything. Now there’s a bunch of idiots that think all 20 or so Google AI mistakes they’ve seen are genuine, so much so that they think almost all Google AI responses are incorrect. Some people are very stupid. Sorry to break it to you, but LLMs are not the first thing to put incorrect information on the internet.

in Infancy Needs Improvements

I’m just gonna go out on a limb and say that if we have to invest in new energy sources just to make these tools functionally usable… maybe we’re better off just paying people to do these jobs instead of burning the planet to a rocky dead husk to achieve AI?

Just playing devil’s advocate here, but if we could get to a future with algorithms so good they are essentially a talking version of all human knowledge, this would be a great thing for humanity.

they are essentially a talking version of all human knowledge

“Obama is a Muslim”

this would be a great thing for humanity.

That’s easy to say. Tell me how. Also tell me how to do it without it being biased about certain subjects over others. Captain Beatty would wildly disagree with this even being possible. His whole shtick in Fahrenheit 451 is that all the books disagreed with one another, so that’s why they started burning them.

There’s this series of books called the WWW series, about AI before AI was the new hot thing every company needed to mention at least once to get its stock price to go up.

Essentially an AI popped up on the internet, which was able to read everything. Due to this it was able to combine data in such a way that it found things like a cure for cancer by combining research papers that no one had ever combined. This is a very bad explanation, but I could see how this makes sense.

Spoiler-free explanation: no human has read everything. I think there could be big implications if there’s an AI that has, and that can see connections that no one ever has.

We already had that with search engines and the world wide web.

But let’s say some company did it, a perfect AI that has read everything and doesn’t hallucinate.

A researcher is working on some experiments. If they could just route them through the AI, and it could analyse whether each experiment was even possible or had maybe already been done, this could speed up research.

With a truly perfect model, which the tech bros are aiming for, I can see the potential for good. I of course am skeptical such a model is possible, but… I kinda see why it would be nice to have.

The way I see it, we’re finally sliding down the trough of disillusionment.

I’m honestly a bit jealous of you. You are going to be so amazed when you realise this stuff is just barely getting started. It’s insane what people are already building with agents. Once this stuff gets mainstream, and specialized hardware hits the market, our current paradigm is going to seem like silent black and white films compared to what will be going on. By 2030 we will feel like 2020 was half a century ago at least.

Looking forward to it, but won’t be disappointed if it takes a bit longer than expected.

Ray Kurzweil has a phenomenal record of making predictions. He’s like 90% or something and has been saying AGI by 2029 for something like 30+ years. Last I heard, he is sticking with it, but he admits he may be a year or two off in either direction. AGI is a pretty broad term, but if you take it as “better than nearly every human in every field of expertise,” then I think 2029 is quite reasonable.

That’s not very far in the future, so it’s going to be really exciting to see how that works out.

Maybe only 51% of the code it writes needs to be good before it can self-improve. In which case, we’re nearly there!

We are already past that. The 48% is from a version of ChatGPT (3.5) that came out a year ago, and there has been lots of progress since then.

“Will this technology save us from ourselves, or are we just jerking off?”

You have no idea how many times I mentioned this observation from my own experience and people attacked me like I called their baby ugly

ChatGPT in its current form is good help, but nowhere near ready to actually replace anyone

A lot of firms are trying to outsource their dev work overseas to communities of non-English speakers, and then handing the result off to a tiny support team.

ChatGPT lets the cheap low skill workers churn out miles of spaghetti code in short order, creating the illusion of efficiency for people who don’t know (or care) what they’re buying.

Yeap… Another brilliant short term strategy to catch a few eager fools that won’t last mid term

The compiler is such a racist boomer it won’t make my program.

GPT-2 came out a little more than 5 years ago, it answered 0% of questions accurately and couldn’t string a sentence together.

GPT-3 came out a little less than 4 years ago and was kind of a neat party trick, but I’m pretty sure answered ~0% of programming questions correctly.

GPT-4 came out a little less than 2 years ago and can answer 48% of programming questions accurately.

I’m not talking about morality, or creativity, or good/bad for humanity, but if you don’t see a trajectory here, I don’t know what to tell you.

Seeing the trajectory is not the ultimate answer to anything.

Perhaps there is some line between assuming infinite growth and declaring that this technology that is not quite good enough right now will therefore never be good enough?

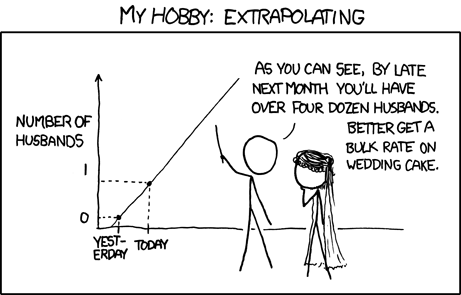

Blindly assuming no further technological advancements seems just as foolish to me as assuming perpetual exponential growth. Ironically, our ability to extrapolate from limited information is a huge part of human intelligence that AI hasn’t solved yet.

will therefore never be good enough?

no one said that. but someone did try to reject the fact it is demonstrably bad right now, because “there is a trajectory”.

I appreciate the XKCD comic, but I think you’re exaggerating that other commenter’s intent.

The tech has been improving, and there’s no obvious reason to assume that we’ve reached the peak already. Nor is the other commenter saying we went from 0 to 1 and so now we’re going to see something 400x as good.

I think the one argument for the assumption that we’re near peak already is the entire issue of AI learning from AI input. I think Numberphile discussed a maths paper that said that to achieve the accuracy that we want, there is simply not enough data to train it on.

That’s of course not to say that we can’t find alternative approaches

We’re close to peak using current NN architectures and methods. All this started with the discovery of the transformer architecture in 2017. Advances in architecture and methods have been fairly small and incremental since then. The advancements in performance have mostly just come from throwing more data and compute at the models, and diminishing returns have been observed. GPT-3 cost something like $15 million to train. GPT-4 is a little better and cost something like $100 million to train. If the next model costs $1 billion to train, it will likely be a little better.

I appreciate the XKCD comic, but I think you’re exaggerating that other commenter’s intent.

i don’t think so. the other commenter clearly rejects the criticism (1) and implies that the existence of an upward trajectory means it will one day overcome the problem (2).

while (1) is a well-documented fact right now, (2) is just wishful thinking right now.

hence the comic, because “the trajectory” doesn’t really mean anything.

In general, “The technology is young and will get better with time” is not just a reasonable argument, but almost a consistent pattern. Note that XKCD’s example is about events, not technology. The comic would be relevant if someone were talking about events happening, or something like sales, but not about technology.

Here, I’m not saying that you’re necessarily right or they’re necessarily wrong, just that the comic you shared is not a good fit.

In general, “The technology is young and will get better with time” is not just a reasonable argument, but almost a consistent pattern. Note that XKCD’s example is about events, not technology.

yeah, no.

try to compare horse speed with the ford model t and blindly extrapolate that into the future. look at moore’s law. technology does not just grow upwards if you give it enough time, most of it has some kind of limit.

and it is not out of the realm of possibility that llms, having already stolen all of human knowledge from the internet, having found it is not enough and spewing out bullshit as a result of that monumental theft, have already reached that limit.

that may not be the case for every machine learning tool developed for some specific purpose, but the blind assumption that it will just grow indiscriminately, because “there is a trend”, is overly optimistic.

I don’t think continuing further would be fruitful. I imagine your stance is heavily influenced by your opposition to, or dislike of, AI/LLMs

oh sure. when someone says “you can’t just blindly extrapolate a curve”, there must be some conspiracy behind it, it absolutely cannot be because you can’t just blindly extrapolate a curve 😂

That comes off as disingenuous in this instance.

The study is using 3.5, not version 4.

4 produces inaccurate programming answers too

Obviously. But it is FAR better yet again.

Not really. I ask it questions all the time and it makes shit up.

Yes. But it is better than 3.5 without any doubt.

Speaking at a Bloomberg event on the sidelines of the World Economic Forum’s annual meeting in Davos, Altman said the silver lining is that more climate-friendly sources of energy, particularly nuclear fusion or cheaper solar power and storage, are the way forward for AI.

“There’s no way to get there without a breakthrough,” he said. “It motivates us to go invest more in fusion.”

It’s a good trajectory, but when you have people running these companies saying that we need “energy breakthroughs” to power something that gives more accurate answers in the face of a world that’s already experiencing serious issues arising from climate change…

It just seems foolhardy if we have to burn the planet down to get to 80% accuracy.

I’m glad Altman is at least promoting nuclear, but at the same time, he has his fingers deep in a nuclear energy company, so it’s not like this isn’t something he might be pushing because it benefits him directly. He’s not promoting nuclear because he cares about humanity, he’s promoting nuclear because he has deep investments in nuclear energy. That seems like just one more capitalist trying to corner the market for themselves.

We are running these things on computers not designed for this. Right now, there are ASICs being built that are specifically designed for it, and traditionally, ASICs give about 5 orders of magnitude of efficiency gains.

Given the data points you made up, I feel it’s safe to assume that this plateau will now be a 10 year stretch

Lemmy seems to be very near-sighted when it comes to the exponential curve of AI progress. I think this is because the community is very anti-corp

In what year do you estimate AI will have 90% accuracy?

No clue? Somewhere between a few years (assuming some unexpected breakthrough) and many decades? The consensus from experts (of which I am not one) seems to be somewhere in the 2030s/40s for AGI. I’m guessing accuracy will probably be more on a topic-by-topic basis. LLMs might never even get there, or only on things they’ve been heavily trained on. If predictive text doesn’t do it then I would be betting on whatever Yann LeCun is working on.

We only need to keep doing incremental improvements in the technology and avoid destroying ourselves in the meantime. That’s all it takes for us to find ourselves in the presence of superintelligent AI one day.

So the issue for me is this:

If these technologies still require large amounts of human intervention to make them usable then why are we expending so much energy on solutions that still require human intervention to make them usable?

Why not skip the burning the planet to a crisp for half-formed technology that can’t give consistent results and instead just pay people a living fucking wage to do the job in the first place?

Seriously, one of the biggest jokes in computer science is that debugging other people’s code gives you worse headaches than migraines.

So now we’re supposed to dump insane amounts of money and energy (as in burning fossil fuels and needing so much energy they’re pushing for a nuclear resurgence) into a tool that results in… having to debug other people’s code?

They’ve literally turned all of programming into the worst aspect of programming for barely any fucking improvement over just letting humans do it.

Why do we think it’s important to burn the planet to a crisp in pursuit of this when humans can already fucking make art and code? Especially when we still need humans to fix the fucking AI’s work to make it functionally usable. That’s still a lot of fucking work expected of humans for a “tool” that’s demanding more energy sources than currently exist.

They don’t require as much human intervention to make the results usable as would be required if the tool didn’t exist at all.

Compilers produce machine code, but require human intervention to write the programs that they compile to machine code. Are compilers useless wastes of energy?

Compilers are deterministic and you can reason about how they came to their results, and because of that they are useful.

No, they’re useful because they produce useful machine code.

That’s a distinction without a difference. The code is useful because we can reason how it was made and we can then make deterministic changes. Try using a compiler that gives you a qualitatively different result each time it runs even though the inputs are the same.
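To make the determinism point concrete, here is a toy sketch. `toy_compile` and `toy_sample` are made-up stand-ins (neither is a real compiler nor a real LLM); all they illustrate is the difference between a function of its input alone and a function that also draws on a random source.

```python
import random


def toy_compile(source: str) -> str:
    """Deterministic: the same input always maps to the same output."""
    return source.upper()


def toy_sample(prompt: str, rng: random.Random) -> str:
    """Stochastic: picks one of several candidate outputs at random."""
    candidates = [prompt.upper(), prompt.lower(), prompt.title()]
    return rng.choice(candidates)


# Run both on the same input 100 times and collect the distinct outputs.
compiled = {toy_compile("add x y") for _ in range(100)}
sampled = {toy_sample("add x y", random.Random(seed)) for seed in range(100)}

print(f"distinct compiled outputs: {len(compiled)}")
print(f"distinct sampled outputs:  {len(sampled)}")
```

In this toy, the sampler becomes reproducible once the seed is pinned, so the variation comes from the random source rather than from the function itself.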

It’s useful because it does the stuff we want it to do.

You’re focusing on a very high level philosophical meaning of “usefulness.” I’m focusing on what actually does what I need it to do.

I’m providing explicit examples of compilers doing “the stuff we want it to do”. LLMs do what we want 50% of the time and the output still needs modifications afterwards. Imagine having to correct a compiler’s output and calling that compiler “useful”.

So if something isn’t perfect it’s not “useful?”

I use LLMs when programming. Despite their imperfection they save me an enormous amount of time. I can confidently confirm that LLMs are useful from personal direct experience.

I honestly don’t know how well AI is going to scale when it comes to power consumption vs performance. If it’s like most of the progress we’ve seen in hardware and software over the years, it could be very promising. On the other hand, past performance is no guarantee of future performance. And your concerns are quite valid. It uses an absurd amount of resources.

The usual AI squad may jump in here with their usual unbridled enthusiasm and copium that other jobs are under threat, but my job is safe, because I’m special.

Eye roll.

Meanwhile, thousands have been laid off already, and executives and shareholders are drooling at the possibility of thinning the workforce even more. Those who think AI will create as many jobs as it destroys are thinking wishfully. Assuming it scales well, it could spell massive layoffs. Some experts predict tens of millions of jobs lost to AI by 2030.

To try and answer the other part of your question…at my job (which is very technical and related to healthcare) we have found AI to be extremely useful. Using Google to search for answers to problems pales by comparison. AI has saved us a lot of time and effort. I can easily imagine us cutting staff eventually, and we’re a small shop.

The future will be a fascinating mix of good and bad when it comes to AI. Some things are quite predictable. Like the loss of creative jobs in art, music, animation, etc. And canned response type jobs like help desk chat, etc. The future of other things like software development, healthcare, accounting, and so on is a lot murkier. But no job (that isn’t very hands-on-physical) is 100% safe. Especially in sectors with high demand and low supply of workers. Some of these models understand incredibly complex things like drug interactions. It’s going to be a wild ride.

Counter arguments

- technology develops exponentially, while humans are … static

- even now, an LLM generates a single line of code faster and cheaper

- the replacement is not programmer->LLM but programmer -> (programmer +LLM). LLM is just a tool.

technology develops exponentially, while humans are … static

I have yet to see a self-improving technology that does not require adaptive human intelligence as an input.

So refreshing when the voice of reason pops up in here. Thank you!

Well fuckin’ said. Preach!

There is a good chance that it is instrumental in discoveries that lead to efficient clean energy. It’s not as if we were at some super clean, unabused planet before language models came along. We have needed help for quite some time. Almost nobody wants to change their own habits (meat, cars, planes, constant AC and heat…), so we need something. Maybe AI will help in this endeavour like it has with so many other things.

There is a good chance that it is instrumental in discoveries that lead to efficient clean energy

There is exactly zero chance… LLMs don’t discover anything, they just remix already existing information. That is how it works.

This is a common misunderstanding of what it means to discover new things. New things are just remixing old things. For example, AI has discovered new matrix multiplications, protein foldings, drugs, chess/go/poker strategies, and much more that are all far superior to anything humans have ever come up with in these fields. In all these cases, the AI was just combining old things in new ways. Even Einstein was just combining old things in new ways. There is exactly zero chance that AI will all of a sudden quit making new discoveries.

Just a slight correction. ML/AI has aided in all sorts of discoveries, whereas GenAI is a “remixing of existing concepts”. I don’t believe I’ve read anything about GenAI discovering new ways to do things, nor do the underlying principles really enable it.

Yes, ML/AI has, you are correct. So far as the capabilities of GenAI goes, we have not even begun to scratch the surface of understanding how all the emergent abilities of GenAI are happening, and nobody has any idea where they will max out at. All we know is that it is finding some patterns that humans find over time as well as many patterns that humans have not been able to find. The chances that it continues to find more and more complex patterns that we have not found are much higher than the chances that we are currently at the max of its ability.

Maybe it won’t be transformers that lead to breakthroughs. It may be some completely different architecture such as Mamba/state space, but there is a good chance that transformers are a step in the direction of discovering something better.

It’s also “discovered” multitudes more that are complete nonsense.

Yeah, that’s the nature of discovery. Humans also “discover” tons of things like chess strategies that are complete nonsense. Over time, we discard the most nonsensical ones and keep the good ones as best as we can. It just turns out that this process is done way faster and more efficiently by machines. That’s why nobody thinks humans are going to surpass AI at chess, go, poker, protein folding, matrix multiplication algorithm creation, and a whole bunch of other things.

Can you provide a source for the claim that all these discoveries are “far superior” to what humans have discovered? I struggle to see how a discovery can be ‘superior’. Isn’t how the discovery is classified and dealt with the crucial aspect?

I mean in these fields, it is superior. The greatest chess player is an AI. The greatest Go player is an AI. The greatest poker player… So far as matrix multiplication goes, there are numerous examples of mathematicians being stuck at finding methods to do it at a certain level of efficiency and then having AI come through and find more efficient ways to do it for given matrix sizes. Similar to this is drug creation and protein folding. The list goes on and on. I wasn’t comparing discoveries across fields, I’m just saying in clearly measurable specific fields, AI has objectively surpassed humans, and it has become pretty routine for this to be the case.

All these things I’ve mentioned are easily searchable, but if you still want sources after my clarification of my meaning let me know, and I’ll find some.

For example, AI has discovered

no, people have discovered. llms were just a tool used to manipulate large sets of data (instructed and trained by people for the specific task) which is something in which computers are obviously better than people. but same as we don’t say “keyboard made a discovery”, the llm didn’t make a discovery either.

that is just intentionally misleading, as is calling the technology “artificial intelligence”, because there is absolutely no intelligence whatsoever.

and comparing that to einstein is just laughable. einstein understood the broad context and principles and applied them creatively. an llm doesn’t understand anything. it is more like a toddler watching its father shave and then moving a lego piece across its face pretending to shave as well, without really understanding what shaving is.

I didn’t say LLMs made these discoveries. They didn’t. AI made those discoveries. Yes, it is true that humans made AI, so in a way, humans made the discoveries, but if that is your take, then it is impossible for AI to ever make any discovery. Really, if we take this way of thinking to its natural conclusion, then even humans can never make discoveries, only the universe can make discoveries, since humans are a result of the universe “universing”. It is arbitrary to try to credit humans with anything that happens further down their evolution.

Humans tried for a long time to get good at chess, and AI came along and made the absolute best chess players utterly irrelevant, even if we give a team of the world’s best chess players an endless clock and the AI a single minute for the entire game. That was 20 years ago. This is happening in more and more fields and showing no sign of stopping. We don’t know yet if discoveries will come from future LLMs like they have from other forms of AI, but we do know that with each generation more and more complex patterns are being identified and utilized by LLMs. 3 years ago the best LLMs would have scored single digits on an IQ test, now they are triple digits, it is laughable to think that anyone knows where the current rapid trajectory will stop for this new technology, and much more laughable to think we are already at the end.

AI made those discoveries. Yes, it is true that humans made AI, so in a way, humans made the discoveries, but if that is your take, then it is impossible for AI to ever make any discovery.

if this is your take, then a lot of keyboards made a lot of discoveries.

AI could make a discovery if there was one (ai). there is none at the moment, and there won’t be any for any foreseeable future.

a tool that can generate statistically probable text without really understanding the meaning of the words is not an intelligence in any sense of the word.

your other examples, like playing chess, are just applying computers to brute-force through a specific mundane task, which is obviously something computers are good at and have been used for since we have had them, but again, that does not constitute thinking, or intelligence, in any way.

it is laughable to think that anyone knows where the current rapid trajectory will stop for this new technology, and much more laughable to think we are already at the end.

it is also laughable to assume it will just continue indefinitely, because “there is a trajectory”. a lot of technologies have some kind of limit.

and just to clarify, i am not some anti-computer get back to trees type. i am eager to see what machine learning models will bring in the field of evidence based medicine, for example, which is something where humans notoriously suck. but i will still not call it “intelligence” or “thinking”, or “making a discovery”. i will call it synthesizing more data than would be humanly possible and finding a pattern in it, and i will consider it a cool result, no matter what we call it.

if this is your take, then a lot of keyboards made a lot of discoveries.

This is literally my point. It is arbitrary to choose that all the good ideas came from “humans”. If we are going to give all credit for anything AI produces to humans, then it only seems fair to give all credit for human things to our common ancestors with chimpanzees, because if it were not for their clever ideas, we would never have been here. But wait, we can’t stop there, because we have to give credit to the original single-celled life forms, and eventually, back to the universe itself(like I mentioned before).

Look, I totally get the desire to want to glorify humans and think that we have something special that machines don’t/can’t have. It kinda sucks to think that we are not so special, and potentially extremely inferior to what is right around the corner. We can’t let that primal ego desire cloud our judgement, though. Our brains are physical machines doing calculations. There is not some magical difference between our calculations that makes it so we can make discoveries and machines cannot.

Imagine you teach your little brother how to play chess, and then your brother thinks about it a bunch and comes up with a bunch of new strategies and starts to kick your butt every time, and eventually starts crushing tournaments. Sure, you can cling to the fact that you taught him how to play, and you can go around telling everyone how “you” are winning all these tournaments because your brother is actually winning them, but it doesn’t change the fact that your brother is the one with the secret sauce that you simply are unable to comprehend.

Your whole point is that if people do it, then it is some special discovery thing, but if computers do it, then it is just computational brute force. There is actually no difference between the two, it is just two different ways of wording the same process. We made programs that could understand the rules, and then they went further, in the same direction that we were trying to go.

So far as continuing indefinitely because we are on a trajectory goes, sure, we will eventually hit some intelligence plateaus, but we are nowhere near this point. Why can I say this with such certainty? Because we have things that we know will work that we haven’t gotten around to combining yet. Some of this gets a bit technical, but a nice way to think of it is this. Right now, we are mainly using hardware designed to generate general graphics that we have hijacked to use for machine learning. The usual speedup when we go from using generalized hardware to specialized is about 5 orders of magnitude (100,000x). That kind of a gain has huge implications in the AI/ML world. This is just one out of many known improvements on the horizon, but it is one of the simplest to wrap your head around. I don’t know how familiar you are with things like crewAI or autogen, but they are phenomenal, they absolutely crush all of the greatest base LLMs, but they are still a bit slow due to how many LLM calls they take. When we have a 100,000x speedup (which is pretty much guaranteed), then everyone will be able to use enormous agent frameworks like this in an instant.
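As a quick sanity check on speedup figures like these: an order of magnitude is a factor of ten, so n orders of magnitude is a factor of 10^n. A minimal sketch of the conversion in both directions (the function names are made up for illustration):

```python
import math


def orders_to_factor(orders: int) -> int:
    """Convert a number of orders of magnitude into a multiplicative factor."""
    return 10 ** orders


def factor_to_orders(factor: float) -> float:
    """Convert a multiplicative factor into orders of magnitude (base-10 log)."""
    return math.log10(factor)


for orders in (3, 4, 5):
    print(f"{orders} orders of magnitude = {orders_to_factor(orders):,}x")

for factor in (10_000, 100_000):
    print(f"{factor:,}x is about {factor_to_orders(factor):.1f} orders of magnitude")
```

So a 10,000x gain is four orders of magnitude and a 100,000x gain is five.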

I understand wanting to see humans as having a monopoly on “intelligence”, but quite frankly that era is coming to an end. It may be a bumpy ride, but the sooner humans learn to adjust to this new world, the better. I don’t think it is something that someone can really make someone else see, but once you do see it, it is very obvious. I suggest you check out the cutting-edge agent stuff out there and then imagine that the most impressive stuff will be routinely done from a single prompt in an instant. Then, on top of that, consider that the base LLMs that we have now are the worst there will ever be. We are in for a very wild ride.

It will just be exploited by megacorporations and distorted in unimaginable ways to push profit to new heights. Just like every glimmer of hope for the future.

Check out the open source AI world. There are incredible things happening, it isn’t all as doom and gloom as pessimistic losers want everyone to believe. The open source community is a thriving ecosystem. Linux is a product of the open source world, it is completely free for anyone to use. It is superior to anything that private corporations have ever created in many ways and this can be plainly seen in the fact that nearly all important computing networks are run on Linux.

The only downside of Free Open Source Software is that it has been unintentionally the biggest transfer of wealth created by labor from volunteer labor to the capitalist class in history.

Way better software, so much so that capitalists use the hell out of it to make tons of money.

The main limiting factor of the open source AI world is hardware. Hard for individual enthusiasts to compete with corporations who have billions of GPUs worth of processing power. I just have one GPU, and it’s an AMD, so it’s even more limited because Nvidia is the brand mostly used for AI projects.

The decentralized AI hardware movement is also rapidly growing to deal with this issue.

Linux is a product of the open source world

And has nothing to do with AI

Yeah, I was responding to someone saying that big corporations were going to take over AI, I was just pointing out that this isn’t a given since there are other massively successful tech projects that are open source community-driven projects. Sorry if I wasn’t clear enough.

Yeah it’s wrong a lot but as a developer, damn it’s useful. I use Gemini for asking questions and Copilot in my IDE personally, and it’s really good at doing mundane text editing bullshit quickly and writing boilerplate, which is a massive time saver. Gemini has at least pointed me in the right direction with quite obscure issues or helped pinpoint the cause of hidden bugs many times. I treat it like an intelligent rubber duck rather than expecting it to just solve everything for me outright.

I tend to agree, but I’ve found that most LLMs are worse than I am with regex, and that’s quite the achievement considering how bad I am with them.

Hey, at least we can rest easy knowing that human devs will be needed to write regex for quite a while longer.

… Wait, I’m horrible at Regex. Oh well.

Same here. It’s good for writing your basic unit tests, and the explain feature is useful for getting your head wrapped around complex syntax, especially as bad as searching for useful documentation has gotten on Google and ddg.

That’s a good way to use it. Like every technological evolution it comes with risks and downsides. But if you are aware of that and know how to use it, it can be a useful tool.

And as always, it only gets better over time. One day we will probably rely more heavily on such AI tools, so it’s a good idea to adapt quickly.

I will resort to ChatGPT for coding help every so often. I’m a fairly experienced programmer, so my questions usually tend to be somewhat complex. I’ve found that it’s extremely useful for those problems that fall into the category of “I could solve this myself in 2 hours, or I could ask AI to solve it for me in seconds.” Usually, I’ll get a working solution, but almost every single time, it’s not a good solution. It provides a great starting-off point to write my own code.

Some of the issues I’ve found (speaking as a C++ developer) are: Variables not declared “const,” extremely inefficient use of data structures, ignoring modern language features, ignoring parallelism, using an improper data type, etc.

ChatGPT is great for generating ideas, but it’s going to be a while before it can actually replace a human developer. Producing code that works isn’t hard; producing code that’s good requires experience.

This has been my experience as well. If you already know what you are doing, LLMs can be a great tool. If you are inexperienced, you cannot assess the quality nor the accuracy of the answers, and are using the LLM to replace your own learning.

I like to draw the parallel to people that have learnt to paint only using digital tools. They often show a particular colouring that shows a lack of understanding of colour theory. Because pipette tools mean that you never have to mix colours, you never have to learn to do so. Painting with physical paint isn’t superior, but it presents a hurdle (mixing paint) that is crucial to learn to overcome. Many digital-only artists will still have learnt on traditional media. Once you have the knowledge, the pipette and colour pickers are just a tool, no longer inhibiting anything.

My experience with an AI coding tool today.

Me: Can you optimize this method.

AI: Okay, here’s an optimized method.

Me seeing the AI completely removed a critical conditional check.

Me: Hey, you completely removed this check with variable xyz

AI: Oops, you’re right, here you go, I fixed it.

It did this 3 times on 3 different optimization requests.

It was 0 for 3

Although there were some good suggestions once you get past the blatant first error

Don’t mean to victim blame but I don’t understand why you would use ChatGPT for hard problems like optimization. And I say this as a heavy ChatGPT/Copilot user.

From my observation, the angle of LLMs on code is linked to the linguistic / syntactic aspects, not to the technical effects of it.

Because I had some methods I thought were too complex and I wanted to see what it’d come up with?

In one case part of the method was checking if a value was within one of 4 ranges and it just dropped 2 of the ranges in the output.

I don’t think that’s asking too much of it.
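A dropped range like that is cheap to catch mechanically. Below is a minimal sketch in Python, assuming the original method can be kept around as a reference while the "optimized" version is compared against it. The four ranges and all function names here are hypothetical, since the post does not say what the real ones were.

```python
# Hypothetical ranges: the post does not say what the real four ranges were.
RANGES = [(0, 9), (20, 29), (40, 49), (60, 69)]


def in_any_range(value, ranges=RANGES):
    """Reference version: True if value is inside any inclusive (low, high) range."""
    return any(low <= value <= high for low, high in ranges)


def broken_optimized(value):
    """Stand-in for an 'optimized' rewrite that silently dropped two ranges."""
    return 0 <= value <= 9 or 20 <= value <= 29


def find_mismatches(candidate, reference, samples):
    """Return every sample value where the candidate disagrees with the reference."""
    return [v for v in samples if candidate(v) != reference(v)]


# Sweep a span that covers all ranges plus some values outside them.
mismatches = find_mismatches(broken_optimized, in_any_range, range(-5, 80))
print(mismatches)  # non-empty means the rewrite changed behavior
```

Running the sweep before accepting any rewritten method turns "me seeing the AI removed a check" into an automatic failure rather than something that depends on careful reading.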

I don’t think that’s asking too much of it.

Apparently it was :D I mean the confines of the tool are very limited, despite what the Devin.ai cult would like to believe.

That’s been my experience with GPT - every answer is a hallucination to some extent, so nearly every answer I receive is inaccurate in some ways. However, the same applies if I was asking a human colleague unfamiliar with a particular system to help me debug something - their answers will be quite inaccurate too, but I’m not expecting them to be accurate, just to have helpful suggestions of things to try.

I still prefer the human colleague in most situations, but if that’s not possible or convenient GPT sometimes at least gets me on the right path.

And ya, it did provide some useful info, so it’s not like it was all wrong.

I’m more just surprised that it was wrong in that way.

I’m curious about what percentage of programmers would give error free answers to these questions in seconds.

Probably fewer than the number of developers whose code runs on the first try.

My favorite is when I ask for something and it gets stuck in a loop, pasting the same comment over and over

ChatGPT and GitHub Copilot are great tools, but they’re like a chainsaw: if you apply them incorrectly or become too casual and careless with them, they will kick back at you and fuck your day up.

What drives me crazy about its programming responses is how awful the HTML it suggests is. The vast majority of its answers are inaccessible. If anything, an LLM should be able to process and reconcile the correct choices for semantic HTML better than a human… but it doesn’t, because it’s not trained on WAI-ARIA… it’s trained on random Reddit and Stack Overflow results and packages those up in nice-sounding words. And it’s not entirely that the training data wants to be inaccessible… a lot of it is just example code without any intent to be accessible anyway. Which is the problem. LLMs don’t know what the context is for something presented as a minimal example vs something presented as an ideal solution, at least, not without careful training. These generalized models don’t spend a lot of time on the tuned training for a particular task because that would counteract the “generalized” capabilities.

Sure, it’s annoying if it doesn’t give a fully formed solution of some Python or JS or whatever to perform a task. Sometimes it’ll go way overboard (it loves to tell you to extend JS object methods with slight tweaks, rather than use built-in methods, for instance, which is a really bad practice but will get the job done).

We already have a massive issue with inaccessible web sites and this tech is just pushing a bunch of people who may already be unaware of accessible html best practices to write even more inaccessible html, confidently.

But hey, that’s what capitalism is good for, right? Making money on half-baked promises and screwing over the disabled. They aren’t profitable, anyway.

If you don’t know what you are doing, and you give it a vague request hoping it will automatically solve your problem, then you will just have to spend even more time to debug its given code.

However, if you know exactly what needs to be done, and give it a good prompt, then it will reward you with very well written code, a clean implementation and comments. Consider it an intern or junior developer.

Example of bad prompt: My code won’t work [paste the code], I keep having this error [paste the error log], please help me

Example of (reasonably) good prompt: This code introduces deep recursion and can sometimes cause a “maximum stack size exceeded” error in certain cases. Please help me convert it to use a while loop instead.

I wouldn’t trust an LLM to produce any kind of programming answer. If you’re skilled enough to know it’s wrong, then you should do it yourself, if you’re not, then you shouldn’t be using it.

I’ve seen plenty of examples of specific, clear, simple prompts that an LLM absolutely butchered by using libraries, functions, classes, and APIs that don’t exist. Likewise with code analysis where it invented bugs that literally did not exist in the actual code.

LLMs don’t have a holistic understanding of anything—they’re your non-programming, but over-confident, friend that’s trying to convey the results of a Google search on low-level memory management in C++.

If you’re skilled enough to know it’s wrong, then you should do it yourself, if you’re not, then you shouldn’t be using it.

Oh I strongly disagree. I’ve been building software for 30 years. I use copilot in vscode and it writes so much of the tedious code and comments for me. Really saves me a lot of time, allowing me to spend more time on the complicated bits.

I’m closing in on 30 years too, started just around '95, and I have yet to see an LLM spit out anything useful that I would actually feel comfortable committing to a project. Usually you end up having to spend as much time—if not more—double-checking and correcting the LLM’s output as you would writing the code yourself. (Full disclosure: I haven’t tried Copilot, so it’s possible that it’s different from Bard/Gemini, ChatGPT and what-have-you, but I’d be surprised if it was that different.)

Here’s a good example of how an LLM doesn’t really understand code in context and thus finds a “bug” that’s literally mitigated in the line before the one where it spots the potential bug: https://daniel.haxx.se/blog/2024/01/02/the-i-in-llm-stands-for-intelligence/ (see “Exhibit B”, which links to: https://hackerone.com/reports/2298307, which is the actual HackerOne report).

LLMs don’t understand code. It’s literally your “helpful”, non-programmer friend, on steroids, cobbling together bits and pieces from searches on SO, Reddit, DevShed, etc. and hoping the answer will make you impressed with him. Reading the study from TFA (https://dl.acm.org/doi/pdf/10.1145/3613904.3642596, §§5.1-5.2 in particular) only cements this position further for me.

And that’s not even touching upon the other issues (like copyright, licensing, etc.) with LLM-generated code that led to NetBSD simply forbidding it in their commit guidelines: https://mastodon.sdf.org/@netbsd/112446618914747900

Edit: Spelling

I’m very familiar with what LLMs do.

You’re misunderstanding what copilot does. It just completes a line or section of code. It doesn’t answer questions - it just continues a pattern. Sometimes quite intelligently.

Shoot me a message on discord and I’ll do a screenshare for you. #locuester

It has improved my quality and speed significantly. More so than any other feature since intellisense was introduced (which many back then also frowned upon).

Fair enough, and thanks for the offer. I found a demo on YouTube. It does indeed look a lot more reasonable than having an LLM actually write the code.

I’m one of the people that don’t use IntelliSense, so it’s probably not for me, but I can definitely see why people find that particular implementation useful. Thanks for catching and correcting my misunderstanding. :)

APIs that don’t exist

I had that. I got a bunch of ok code for an AWS API, but then it decided to hallucinate a method. I tried all kind of prompt to instruct it that the method didn’t exist and not to use it, but it always came back telling me it was the right way to do it.

Anyway, still faster than reading the doc for a one off script I just wanted thrown together quickly and never to be reused again.
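For the hallucinated-method case, one cheap guard is to check that a name actually resolves before building on it. This is only a sketch in Python, and `api_exists` is a hypothetical helper, not part of any SDK. It confirms that an attribute exists on an importable module, nothing more: it says nothing about whether the signature or behavior matches what the LLM claimed, and SDKs that create methods at runtime would need the same check done on a live object instead.

```python
import importlib


def api_exists(module_name, dotted_attr):
    """Return True if module_name imports and dotted_attr resolves on it.

    Hypothetical helper: checks existence only, not signature or behavior.
    """
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    # Walk "a.b.c" one attribute at a time.
    for part in dotted_attr.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


print(api_exists("json", "dumps"))        # real function
print(api_exists("json", "dump_pretty"))  # made-up name
```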

Example of (reasonably) good prompt: This code introduces deep recursion and can sometimes cause a “maximum stack size exceeded” error in certain cases. Please help me convert it to use a while loop instead.

That sounds like those cases on YouTube where the correction to the code was shorter than the prompt hahaha
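For scale, here is roughly what that kind of conversion looks like. This is a minimal sketch in Python, not the code from the prompt (which was never posted): flattening nested lists is an assumed stand-in task, and all function names are hypothetical. Python reports deep recursion as a `RecursionError` rather than the "maximum stack size exceeded" wording quoted above, but the fix has the same shape: replace the call stack with an explicit one and drive it with a `while` loop.

```python
def flatten_recursive(items):
    """Flatten nested lists recursively; deep nesting can raise RecursionError."""
    out = []
    for item in items:
        if isinstance(item, list):
            out.extend(flatten_recursive(item))
        else:
            out.append(item)
    return out


def flatten_iterative(items):
    """Same result, but an explicit stack of iterators replaces the call stack."""
    out = []
    stack = [iter(items)]
    while stack:
        try:
            item = next(stack[-1])
        except StopIteration:
            stack.pop()  # finished this nesting level, resume the parent
            continue
        if isinstance(item, list):
            stack.append(iter(item))  # descend without a function call
        else:
            out.append(item)
    return out


def make_nested(depth):
    """Build a list nested `depth` levels deep, for exercising both versions."""
    nested = [1]
    for _ in range(depth):
        nested = [nested, 2]
    return nested


print(flatten_iterative([1, [2, [3, 4]], 5]))
print(len(flatten_iterative(make_nested(5000))))
```

The iterative version is a little longer than the recursive one, so "shorter than the prompt" will not always hold, but it is still a small, mechanical change that is easy to review.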

I’ve found chatgpt reasonably good for one thing: Generating regex-patterns. I don’t know regex for shit, but if I ask for a pattern described with words, I get a working pattern 9/10 times. It’s also a very easy use-case to double check.
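The "easy to double check" part can be made concrete. A minimal sketch in Python, assuming you can write down a few strings that should match and a few that should not; `check_pattern` and the date pattern are hypothetical examples, not anything from the thread.

```python
import re


def check_pattern(pattern, should_match, should_not_match):
    """Return the (text, expected_match) cases the pattern gets wrong.

    Uses full-string matching, so partial matches count as failures.
    """
    compiled = re.compile(pattern)
    failures = []
    for text in should_match:
        if compiled.fullmatch(text) is None:
            failures.append((text, True))
    for text in should_not_match:
        if compiled.fullmatch(text) is not None:
            failures.append((text, False))
    return failures


# Hypothetical generated pattern: checks the YYYY-MM-DD shape only, not validity.
DATE_PATTERN = r"\d{4}-\d{2}-\d{2}"

failures = check_pattern(
    DATE_PATTERN,
    should_match=["2024-05-23", "1999-12-31"],
    should_not_match=["2024-5-23", "24-05-23", "2024-05-23T00:00"],
)
print(failures)  # an empty list means every listed case behaved as expected
```

The negative cases matter most: a generated pattern that matches too much passes every positive example.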

I just use it to get ideas about how to do something or ask it to write short functions for stuff I wouldn’t know that well. I tried using it to create a graphical UI for a script but that was a constant struggle to keep it on track. It managed to create something that kind of worked but it was like trying to hold 2 magnets of opposing polarity together and I had to constantly reset the conversation after it got “corrupted”.

It’s a useful tool if you don’t rely on it, use it correctly and don’t trust it too much.

This has been true for code you pull from posts on stackoverflow since forever. There are some good ideas, but they a. Aren’t exactly what you are trying to solve and b. Some of the ideas are incomplete or just bad and it is up to you to sort the wheat from the chaff.

Yeah I’ve been trying to recreate the same gui tools with every version and it is getting much better but it still struggles. The python specific gpt actually manages to create what I ask for and can make changes once it’s got the base established, I have to correct a few little glitches but nothing too terrible.

For functions like saving all the info in text boxes to JSON and filling that info back in when load is pressed, it never fails. And for making little test scripts for functions or layouts, it saves me huge amounts of mental effort.
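The save/load task mentioned here is small enough to sketch. This is a minimal version in Python with the GUI left out: it assumes the text box contents have already been read into a plain dict, and the function and field names are hypothetical.

```python
import json
from pathlib import Path


def save_fields(fields, path):
    """Write a dict of field name -> text to a JSON file."""
    Path(path).write_text(json.dumps(fields, indent=2), encoding="utf-8")


def load_fields(path, defaults):
    """Read saved values back, falling back to defaults for a missing file or key."""
    file = Path(path)
    if not file.exists():
        return dict(defaults)
    saved = json.loads(file.read_text(encoding="utf-8"))
    # Only keep known fields, so stale keys in an old file are ignored.
    return {name: saved.get(name, default) for name, default in defaults.items()}
```

In a real GUI, the save button would collect each text box into the dict before calling `save_fields`, and the load button would push the values returned by `load_fields` back into the widgets.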

It’s like image gen: you have to know what to expect to get the most out of it. Ask for something it finds difficult and it’s easy to confuse it, but ask for things it’s good at and it’ll amaze you.

I couldn’t have said it better

I guess it depends on the programming language… With python, I got very fast great results. But python is all about quick and dirty 😂

I asked ChatGPT for assistance with JavaScript doing HL7 stuff and it was a joke… After the seventh correction I gave up on it (at least for that task)

In Rust, it’s not great. It can’t do proper memory management in the language, which is pretty essential.

Well, if you use free chatGPT you only have knowledge until 2022, maybe that’s the reason

People down vote me when I point this out in response to “AI will take our jobs” doomerism.

I mean, AI eventually will take our jobs, and with any luck it’ll be a good thing when that happens. Just because Chat GPT v3 (or w/e) isn’t up to the task doesn’t mean v12 won’t be.

I’m not so sure about the “it’ll be good” part. I’d like to imagine a world where people don’t have to work because everything is done by robots but in reality you’ll have some companies that will make trillions while everyone else will go hungry and become poor and homeless.

Yes, that’s exactly the scenario we need to avoid. Automated gay space communism would be ideal, but social democracy might do in a pinch. A sufficiently well-designed tax system coupled with a robust welfare system should make the transition survivable, but the danger with making that our goal is allowing the private firms enough political power that they can reverse the changes.

Yes, this is also true. I see things like UBI as an inevitable necessity, because AI and automation in general will eliminate the need for most companies to employ humans. Our capitalistic system is set up in a way such that a person can sell their ability to work and provide value to the owner class, but if that dynamic is ever challenged on a fundamental level, it will violently collapse when people who can’t get jobs because a robot replaced them either reject automation to preserve the status quo or embrace a new dynamic that provides for the population’s basic needs without requiring them to be productive.

But the way that managers talk about AI makes it sound like the techbros have convinced everybody that AI is far more powerful than it currently is, which is a glorified chatbot with access to unfiltered Google search results.

It could be a good thing, but the price for that is making being unemployed okay.

This begs some reflection. What is a “job”, functionally? What would be needed for losing it to be good?

I suspect a system with jobs would not eradicate jobs, just change them.

If it’s possible for AI to reach that level. We shouldn’t take for granted it’s possible.

I was really humbled when I learned that a cubic mm of human brain matter took over a petabyte to map. It suggests to me that AI is nowhere close to the level you’re describing.

It suggests to me that AI

This is a fallacy. Specifically, I think you’re committing the informal fallacy confusion of necessary and sufficient conditions. That is to say, we know that if we can reliably simulate a human brain, then we can make an artificial sophont (this is true by mere definition). However, we have no idea what the minimum hardware requirements are for a sufficiently optimized program that runs a sapient mind. Note: I am setting aside what the definition of sapience is, because if you ask 2 different people you’ll get 20 different answers.

We shouldn’t take for granted it’s possible.

I’m pulling from a couple decades of philosophy and conservative estimates of the upper limits of what’s possible as well as some decently-founded plans on how it’s achievable. Suffice it to say, after immersing myself in these discussions for as long as I have I’m pretty thoroughly convinced that AI is not only possible but likely.

The canonical argument goes something like this: if brains are magic, we cannot say if humanlike AI is possible. If brains are not magic, then we know that natural processes can create sapience. Since natural processes can create sapience, it is extraordinarily unlikely that it will prove impossible to create it artificially.

So with our main premise (AI is possible) cogently established, we need to ask the question: “since it’s possible, will it be done, and if not why?” There are a great many advantages to AI, and while there are many risks, the barrier of entry for making progress is shockingly low. We are talking about the potential to create an artificial god with all the wonders and dangers that implies. It’s like a nuclear weapon if you didn’t need to source the uranium; everyone wants to have one, and no one wants their enemy to decide what it gets used for. So everyone has the incentive to build it (it’s really useful) and everyone has a very powerful disincentive to forbidding the research (there’s no way to stop everyone who wants to, and so the people who’d listen are the people who would make an AI who’ll probably be friendly). So what possible scenario do we have that would mean strong general AI (let alone the simpler things that’d replace everyone’s jobs) never gets developed? The answers range from total societal collapse to extinction, which are all worse than a bad transition to full automation.

So either AI steals everyone’s job or something worse happens.

Thanks for the detailed and thought provoking response. I stand corrected. I appreciate the depth you went into!

You’re welcome! I’m always happy to learn someone re-evaluated their position in light of new information that I provided. 🙂

Even if AI is able to answer all questions 100% accurately, it wouldn’t mean much either way. Most of programming is making adjustments to old code while ensuring nothing breaks. Gonna be a while before AI will be able to do that reliably.

I always thought of it as a tool to write boilerplate faster, so no surprises for me